How to configure your first crawler

Running your first crawl takes a couple of minutes. The default configuration extracts some common attributes, such as a title, a description, headers, and images. Change and optimize this for your website:

- The default extractor might not work as-is with your website if the data isn’t formatted the same way.

- Extract more content so your users can discover it.

The Crawler quickstart will help you understand how the configuration file works. It includes several tips on building and optimizing a crawler.

What’s the Crawler configuration API?

To edit and test your crawler’s configuration, use the editor in the Crawler Admin.

Configuring the entry points

startUrls

Giving the crawler the correct entry points is crucial. The default configuration uses the base URL you set when creating the crawler. Running the crawler as-is discovers only some of the pages. Not all pages are discovered because the homepage of the Algolia blog only displays the most recent articles: the See More button at the bottom of the page dynamically loads more posts.

The Crawler can’t interact with web pages. For that reason, it’s limited to the first set of articles displayed on the homepage.

The best solution in this case is to rely on a sitemap.

sitemaps

Check to see if the site has a sitemap. Sitemaps are specifically intended for web crawlers: they give a complete list of all the relevant pages a crawler needs to browse. When using a sitemap, you’re sure that your crawler is capturing all URLs.

The location of the sitemap is usually listed in the robots.txt file. This standard file sits at the root level of a website and tells web crawlers which areas of the website they’re allowed to visit.

Sitemaps are usually at the root level of the website too, in a file named sitemap.xml.
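To illustrate how a sitemap location can be read from robots.txt, here is a minimal sketch. The helper name `extractSitemapUrls` and the sample file content are assumptions made for illustration; they aren’t part of the Crawler, which doesn’t need this code to run.

```javascript
// Sketch: list the sitemap URLs declared in the text of a robots.txt file.
// `extractSitemapUrls` is a hypothetical helper, not a Crawler API.
function extractSitemapUrls(robotsTxt) {
  return robotsTxt
    .split(/\r?\n/)
    // Drop everything after a "#" (comments) and surrounding whitespace.
    .map((line) => line.replace(/#.*$/, "").trim())
    // Match the case-insensitive "Sitemap: <URL>" directive.
    .map((line) => /^sitemap\s*:\s*(\S+)/i.exec(line))
    .filter((match) => match !== null)
    .map((match) => match[1]);
}

// Sample robots.txt content, made up for this example.
const sampleRobotsTxt = [
  "User-agent: *",
  "Disallow: /wp-admin/",
  "Sitemap: https://blog.algolia.com/sitemap.xml",
].join("\n");

console.log(extractSitemapUrls(sampleRobotsTxt));
```

With the sample content, the function returns a single URL, the one on the `Sitemap:` line. If a site’s robots.txt has no such line, trying `/sitemap.xml` at the root is a reasonable next check.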

Remove the startUrls and instead add the Algolia blog sitemap to your configuration:

new Crawler({
-  startUrls: ["https://blog.algolia.com/"],
+  sitemaps: ["https://blog.algolia.com/sitemap.xml"],
   // ...
});

With the sitemap in your crawler configuration, run a new crawl. It should now display several hundred records.

Finding data on web pages

After indexing your blog pages, add more data to the generated records to improve discoverability.

CSS selectors

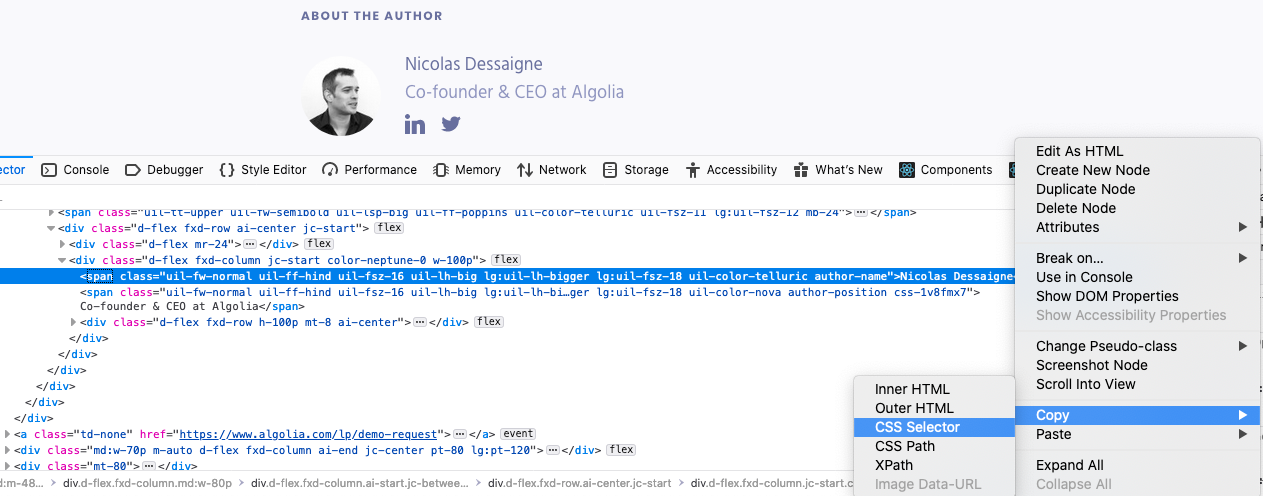

The main way to extract data from a web page is by using a CSS selector. To find the correct one, browse the URLs you want to crawl and scroll to the information you want to extract. To get the author of each article:

- Right-click the text to extract and select Inspect Element (or Inspect).

- In the browser console, right-click the highlighted HTML node and select Copy > CSS Selector.

Depending on the browser, the generated selector might be complex and may be incompatible with Cheerio.

If that’s the case, you must create it yourself.

The selector must be common to all the pages visited by this recordExtractor.

Use the URL tester to check the result.

With Firefox, you get the following selector: .author-name.

- Add the following field to the record returned by the recordExtractor method:

author: $(".author-name").text(),

- With the URL tester, run a test for one of the blog articles (for example, https://blog.algolia.com/algolia-series-c-2019-funding/).

In the logs, see the following generated record:

author: ""

This means the author field wasn’t retrieved accurately.

Debugging selectors

Debugging the selector with the console

Use the browser console to debug a selector.

The recordExtractor lets you use console.log and displays the output in the Logs tab of the URL tester.

console.log($(".author-name").text());
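As a slightly fuller sketch of the same idea, the extractor below logs how many nodes the selector matched before building the record. Only `url` and `$` are used here, and the log messages are illustrative.

```javascript
// Sketch: a recordExtractor that logs what the selector matched.
// `$` is the Cheerio instance the Crawler passes to the extractor.
const recordExtractor = ({ url, $ }) => {
  const nodes = $(".author-name");
  // `length` is 0 when the selector matches nothing in the crawled HTML.
  console.log(`${url.href}: ${nodes.length} node(s) match .author-name`);
  const author = nodes.text().trim();
  console.log(`author text: "${author}"`);
  return [{ url: url.href, author }];
};
```

A count of 0 suggests the element isn’t present in the HTML the Crawler receives, while a non-zero count with empty text points to an element that gets filled in later.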

In your browser

To understand why the Crawler can’t extract the information, use the browser’s developer tools. Open the browser console:

- In Firefox: Tools > Web Developer > Web Console

- In Chrome: View > Developer > JavaScript Console

Test your selector using the DOM API.

Retrieve the HTML element containing the author’s name.

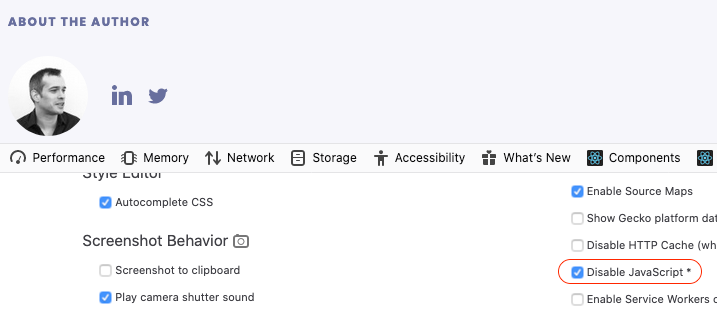
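One possible way to run that check from the console is sketched below. `inspectSelector` is a hypothetical helper name; it relies only on the standard `querySelectorAll` and `textContent` DOM APIs.

```javascript
// Sketch: return the trimmed text of every node matching a CSS selector.
// `inspectSelector` is a hypothetical helper; `root` is usually `document`.
function inspectSelector(root, selector) {
  const nodes = Array.from(root.querySelectorAll(selector));
  return nodes.map((node) => node.textContent.trim());
}

// In the browser console, on a blog article:
// inspectSelector(document, ".author-name")
```

An empty array means the selector matches nothing on the page as currently rendered.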

Check if JavaScript is enabled

The difference between what you see in a browser and what the Crawler sees often comes from the fact that JavaScript isn’t enabled by default on the crawler. Rendering JavaScript makes crawling slower and consumes more resources, so it’s up to you to enable it on your crawler.

To display your page without JavaScript:

- In the browser console, click the three dots on the right and select Settings.

- Find the Disable JavaScript checkbox and tick it.

- If you’re using Chrome, reload the page.

Now look at the author section: the author’s name is missing.

This is why the Crawler can’t extract it: your blog articles need JavaScript to display all the information.

To fix that, enable JavaScript in your crawler for all blog entries with the renderJavaScript option:

new Crawler({
+  renderJavaScript: ['https://blog.algolia.com/*'],
});

When running a new test on the same blog article, the author field will now populate as expected.

Meta tags are often a better way to get that information without enabling JavaScript.

Meta tags

Websites often include useful information in their meta tags, so they can index well in search engines and social media:

- Standard HTML meta tags.

- Open Graph is a protocol originally developed by Facebook to let people share web pages on social media. The information it exposes is clean and expected to be searchable. If the website has Open Graph tags, you should use them.

- Twitter Card tags, which are like Open Graph but specific to X (Twitter).

Look at some other meta tags that the Algolia blog exposes.

Do this either on the Inspector tab of your browser’s developer tools or by right-clicking anywhere on the page and selecting View Page Source.

<meta content="Algolia | Onward! Announcing Algolia's $110 Million in New Funding" property="og:title"/>
<meta content="Today, we announced our latest round of funding of $110 million with our lead investor Accel and new investor Salesforce Ventures along with many others. In" name="description"/>
<meta content="https://blog-api.algolia.com/wp-content/uploads/2019/10/09-2019_Serie-C-announcement-01-2.png" property="og:image"/>
<meta content="Nicolas Dessaigne" name="author"/>

This information is directly readable without JavaScript.

The default configuration already includes some of them.

Remove the renderJavaScript option and update your configuration to get the author using this tag:

author: $("meta[name=author]").attr("content"),

$ is a Cheerio instance that lets you manipulate crawled data.

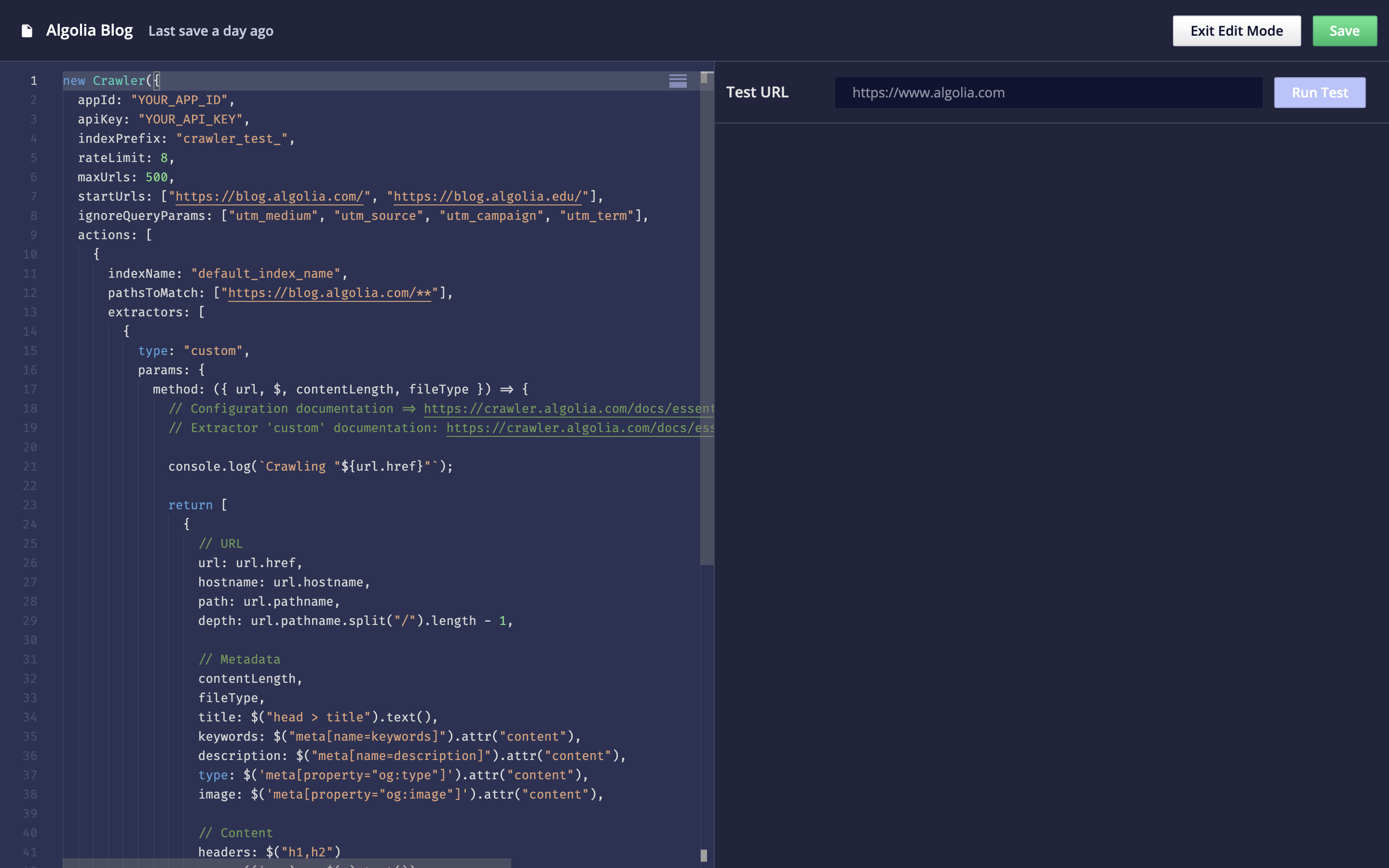
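Because not every page exposes every meta tag, it can help to try several selectors in order. The sketch below assumes a hypothetical helper, `firstMetaContent`, and an illustrative choice of fallback tags (`twitter:title`, `og:description`); check the page source to confirm which tags the site really provides.

```javascript
// Sketch: return the `content` attribute of the first selector that yields
// a non-empty value. `firstMetaContent` is a hypothetical helper.
function firstMetaContent($, selectors) {
  for (const selector of selectors) {
    // Cheerio's attr() returns undefined when nothing matches.
    const value = $(selector).attr("content");
    if (typeof value === "string" && value.trim() !== "") {
      return value.trim();
    }
  }
  return undefined;
}

// Possible use inside a recordExtractor (fallback tags are assumptions):
const extractMetadata = ($) => ({
  title: firstMetaContent($, [
    'meta[property="og:title"]',
    'meta[name="twitter:title"]',
  ]),
  description: firstMetaContent($, [
    'meta[property="og:description"]',
    'meta[name="description"]',
  ]),
  author: firstMetaContent($, ['meta[name="author"]']),
});
```

Attributes without a match come back as `undefined`, so they’re easy to spot in the URL tester output.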

Improved configuration

The improved blog configuration now looks like the following:

new Crawler({
  appId: "YOUR_APP_ID",
  apiKey: "YOUR_API_KEY",
  indexPrefix: "crawler_",
  rateLimit: 8,
  sitemaps: ["https://blog.algolia.com/sitemap.xml"],
  ignoreQueryParams: ["utm_medium", "utm_source", "utm_campaign", "utm_term"],
  actions: [
    {
      indexName: "algolia_blog",
      pathsToMatch: ["https://blog.algolia.com/**"],
      recordExtractor: ({ url, $, contentLength, fileType }) => {
        return [
          {
            // The URL of the page
            url: url.href,
            // The metadata
            title: $('meta[property="og:title"]').attr("content"),
            author: $('meta[name="author"]').attr("content"),
            image: $('meta[property="og:image"]').attr("content"),
            keywords: $("meta[name=keywords]").attr("content"),
            description: $("meta[name=description]").attr("content"),
          }
        ];
      }
    }
  ],
  initialIndexSettings: {
    algolia_blog: {
      searchableAttributes: ["title", "author", "description"],
      customRanking: ["desc(date)"],
      attributesForFaceting: ["author"]
    }
  }
});

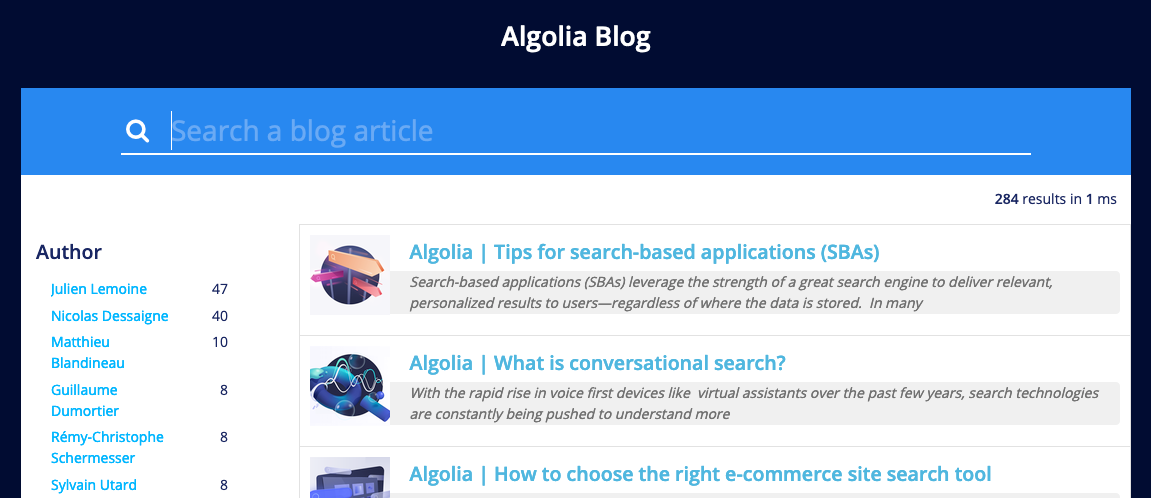

Next steps

Having the author enables you to add faceting to your search implementation.

Useful next steps could be:

- Index the article content to improve its discoverability.

- Index data that lets you add custom ranking, such as the publish date of the article.

- Index Google Analytics data to boost the most popular articles.

- Index more information about the author, such as their picture and job title.

- Set up a schedule to have the blog regularly crawled.
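The improved configuration ranks on a `date` attribute that the record doesn’t include yet. As a sketch of the custom ranking step, the helper below turns a date string into a numeric Unix timestamp, which suits custom ranking. Both the helper name `toUnixTimestamp` and the `article:published_time` meta tag are assumptions: check the page source to see where the blog exposes its publish date.

```javascript
// Sketch: convert an ISO 8601 date string to a Unix timestamp in seconds.
// Returns undefined when the value is missing or can't be parsed.
// `toUnixTimestamp` is a hypothetical helper, not a Crawler API.
function toUnixTimestamp(isoDate) {
  if (typeof isoDate !== "string") return undefined;
  const milliseconds = Date.parse(isoDate);
  return Number.isNaN(milliseconds) ? undefined : Math.floor(milliseconds / 1000);
}

// Possible use inside a recordExtractor (the meta tag name is an assumption):
// date: toUnixTimestamp(
//   $('meta[property="article:published_time"]').attr("content")
// ),

console.log(toUnixTimestamp("2019-10-15T00:00:00Z"));
```

Returning `undefined` for missing dates keeps invalid values out of the record instead of indexing `NaN`.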

Configuration examples

To get a better sense of how to use and format crawler configurations, check out the GitHub repository of sample configuration files.